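The asynchronous nature of mobile applications and frameworks often makes it difficult to write reliable, repeatable tests. When a user event is injected, the testing framework must wait for the app to finish reacting to it, which can range from changing some text on screen to completely recreating an activity. A test whose behavior is not deterministic is flaky.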

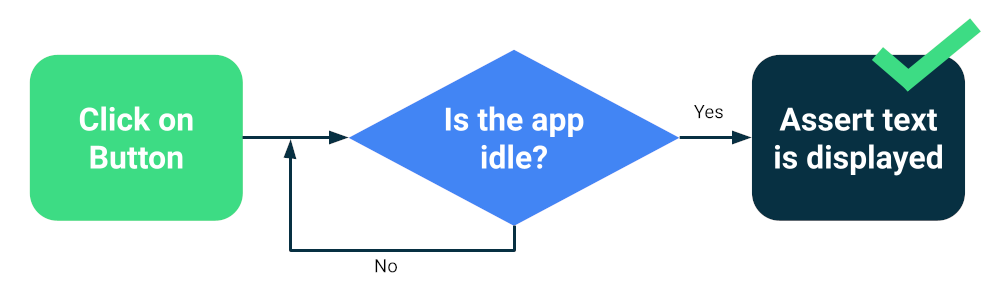

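Modern frameworks such as Compose or Espresso are designed with testing in mind, so they make sure that the UI is idle before the next test action or assertion. This is synchronization.

Test synchronization

Issues can still arise when you run asynchronous or background operations that the test does not know about, such as loading data from a database or showing infinite animations.

To increase the reliability of your test suite, you can install a way to track background operations, such as Espresso idling resources. You can also replace modules with testing versions that you can query for idleness or that improve synchronization, such as TestDispatcher for coroutines or RxIdler for RxJava.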
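
To make the idea of tracking background operations concrete, the following is a minimal sketch of a counter that reports whether any tracked work is still in flight. `BusyCounter` and its members are hypothetical names used only for illustration and are not an Espresso API. Espresso's own `CountingIdlingResource` follows a similar increment and decrement pattern and is registered through `IdlingRegistry`.

```kotlin
import java.util.concurrent.atomic.AtomicInteger

/**
 * Hypothetical helper (not an Espresso class): counts in-flight background
 * operations so that test infrastructure can ask whether the app is idle.
 */
class BusyCounter {
    private val inFlight = AtomicInteger(0)

    /** True when no tracked operation is currently running. */
    val isIdle: Boolean
        get() = inFlight.get() == 0

    /** Marks the start of a tracked operation. */
    fun begin() {
        inFlight.incrementAndGet()
    }

    /** Marks the end of a tracked operation and rejects unbalanced calls. */
    fun end() {
        val remaining = inFlight.decrementAndGet()
        if (remaining < 0) {
            // Undo the decrement so the counter stays consistent.
            inFlight.incrementAndGet()
            error("end() called without a matching begin()")
        }
    }

    /** Runs [block] as a tracked operation, even if it throws. */
    fun <T> track(block: () -> T): T {
        begin()
        try {
            return block()
        } finally {
            end()
        }
    }
}
```

Under these assumptions, app code would wrap a database load in `track { ... }`, and the test side would wait until `isIdle` is true before performing the next action or assertion.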

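Ways to improve stability

Big tests can catch many regressions at once because they exercise multiple components of an app. They typically run on emulators or devices, which gives them high fidelity. While large end-to-end tests provide comprehensive coverage, they are more prone to occasional failures.

The main measures you can take to reduce flakiness are the following:

- Configure devices correctly

- Prevent synchronization issues

- Implement retries

To create big tests with Compose or Espresso, you typically start one of your activities and navigate as a user would, verifying that the UI behaves correctly with assertions or screenshot tests.

Other frameworks, such as UI Automator, allow for a bigger scope because you can interact with the system UI and other apps. However, UI Automator tests might require more manual synchronization, so they tend to be less reliable.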

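Configure devices

First, to improve the reliability of your tests, make sure that the device's operating system does not unexpectedly interrupt test execution. This can happen, for example, when a system update dialog is shown on top of other apps or when disk space is insufficient.

Device farm providers configure their devices and emulators, so you normally don't have to take any action. However, they might have their own configuration directives for special cases.

Gradle-managed devices

If you manage emulators yourself, you can use Gradle-managed devices to define which devices run your tests: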

    android {
        testOptions {
            managedDevices {
                localDevices {
                    create("pixel2api30") {
                        // Use device profiles you typically see in Android Studio.
                        device = "Pixel 2"
                        // Use only API levels 27 and higher.
                        apiLevel = 30
                        // To include Google services, use "google".
                        systemImageSource = "aosp"
                    }
                }
            }
        }
    }

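With this configuration, the following command creates an emulator image, starts an instance, runs the tests, and then shuts the instance down.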

    ./gradlew pixel2api30DebugAndroidTest

Gradle-managed devices include mechanisms to retry when a device disconnects, along with other improvements.

Prevent synchronization issues

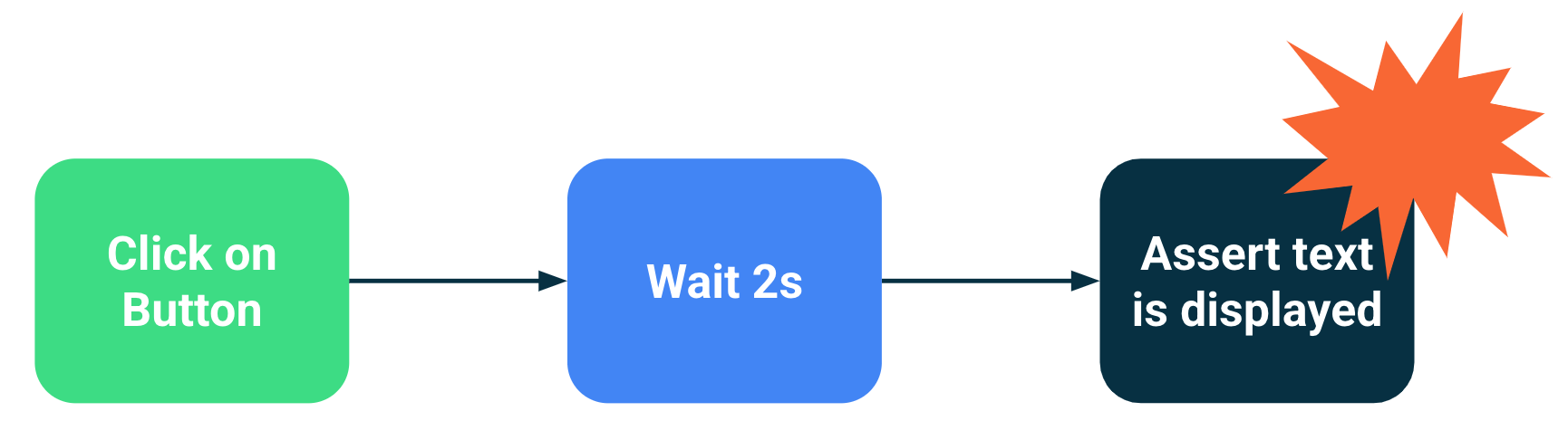

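Components that perform background or asynchronous operations can cause test failures because a test statement runs before the UI is ready for it. As the scope of a test grows, so do the chances of it becoming flaky. These synchronization issues are a primary source of flakiness because the test framework has to deduce whether an activity has finished loading or whether it should wait longer.

Solutions

You can use Espresso's idling resources to indicate when an app is busy, but it is hard to track every asynchronous operation, especially in very big end-to-end tests. Idling resources can also be difficult to install without polluting the code under test.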

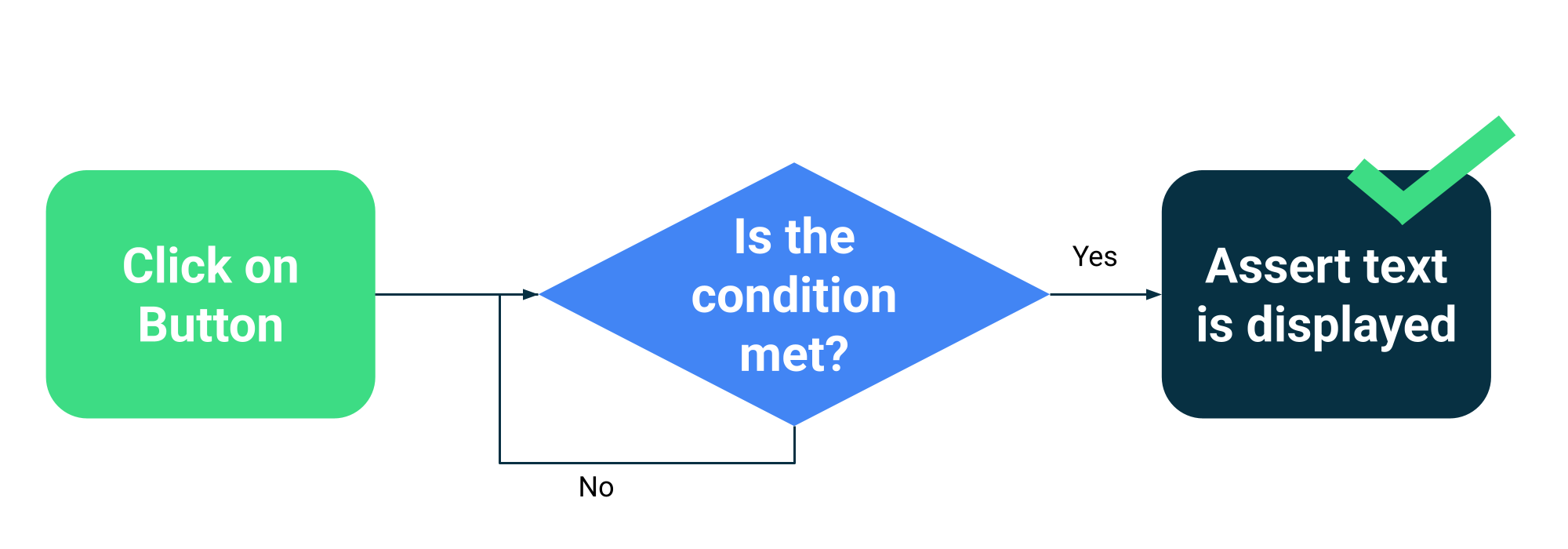

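Instead of estimating whether an activity is busy, you can make your tests wait until specific conditions are met. For example, you can wait until a specific text or component is shown in the UI.

Compose provides a collection of testing APIs as part of ComposeTestRule to wait for different matchers: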

    fun waitUntilAtLeastOneExists(matcher: SemanticsMatcher, timeoutMillis: Long = 1000L)

    fun waitUntilDoesNotExist(matcher: SemanticsMatcher, timeoutMillis: Long = 1000L)

    fun waitUntilExactlyOneExists(matcher: SemanticsMatcher, timeoutMillis: Long = 1000L)

    fun waitUntilNodeCount(matcher: SemanticsMatcher, count: Int, timeoutMillis: Long = 1000L)

There is also a generic API that takes any function returning a boolean:

    fun waitUntil(timeoutMillis: Long, condition: () -> Boolean): Unit

Example usage:

    composeTestRule.waitUntilExactlyOneExists(hasText("Continue"))
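
To illustrate what waiting for a condition means in practice, here is a minimal sketch of a polling loop with a timeout. `pollUntil` and its `pollIntervalMillis` parameter are hypothetical and are not part of Compose, and this is not how `ComposeTestRule.waitUntil` is implemented. Unlike `waitUntil`, which throws when the timeout elapses, this sketch returns `false` so that the outcome is easy to inspect.

```kotlin
/**
 * Hypothetical helper (not a Compose API): checks [condition] repeatedly until
 * it returns true or [timeoutMillis] has elapsed.
 *
 * Returns true if the condition was met in time, false on timeout.
 */
fun pollUntil(
    timeoutMillis: Long,
    pollIntervalMillis: Long = 10L,
    condition: () -> Boolean
): Boolean {
    // nanoTime is monotonic, so wall-clock adjustments do not affect the wait.
    val deadline = System.nanoTime() + timeoutMillis * 1_000_000L
    while (true) {
        if (condition()) return true
        if (System.nanoTime() - deadline >= 0) return false
        Thread.sleep(pollIntervalMillis)
    }
}
```

The key design point is that the test states the condition it needs, such as a text being visible, instead of sleeping for a fixed time and hoping the app is ready.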

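Retry mechanisms

You should fix flaky tests, but sometimes the conditions that make them fail are so rare that they are hard to reproduce. While you should always keep track of flaky tests and fix them, a retry mechanism can help maintain developer productivity by running a test several times until it passes.

Retries need to happen at multiple levels to guard against issues such as:

- A timeout or lost connection to the device

- A single test failure

How you install or configure retries depends on your testing frameworks and infrastructure, but typical mechanisms include:

- A JUnit rule that retries any test a number of times

- A retry action or step in your continuous integration workflow

- A system that restarts an emulator when it is unresponsive, such as Gradle-managed devices.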
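
The test-level retry idea can be sketched as a small helper that reruns a block until it succeeds or the attempts run out. `runWithRetries` is a hypothetical name and is not part of JUnit or any Android testing library. A JUnit 4 rule could apply a loop of the same shape around `Statement.evaluate()`, but that wiring is left out here to keep the sketch self-contained.

```kotlin
/**
 * Hypothetical helper: runs [block] up to [maxAttempts] times and returns the
 * first successful result. If every attempt fails, the last failure is
 * rethrown with the earlier failures attached as suppressed exceptions.
 */
fun <T> runWithRetries(maxAttempts: Int, block: (attempt: Int) -> T): T {
    require(maxAttempts >= 1) { "maxAttempts must be at least 1" }
    val failures = mutableListOf<Throwable>()
    for (attempt in 1..maxAttempts) {
        try {
            return block(attempt)
        } catch (t: Throwable) {
            // Catch Throwable because assertion failures are Errors, not Exceptions.
            failures += t
        }
    }
    val last = failures.last()
    // Keep earlier failures visible so that flakiness is not silently hidden.
    failures.dropLast(1).forEach { if (it !== last) last.addSuppressed(it) }
    throw last
}
```

Recording every failed attempt, rather than discarding it, keeps the flaky test visible so that it can still be tracked and fixed.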