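Android 6.0 (M) offers new features for users and app developers. This document provides an introduction to the most notable APIs.

Start developing

To start building apps for Android 6.0, you must first get the Android SDK. Then use the SDK Manager to download the Android 6.0 SDK Platform and System Images.

Update your target API level

To better optimize your app for devices running Android 6.0, set your targetSdkVersion to "23", install your app on an Android 6.0 system image, test it, and then publish the updated app with this change.

You can use Android 6.0 APIs while also supporting older versions by adding conditions to your code that check the system API level before executing APIs not supported by your minSdkVersion. To learn more about maintaining backward compatibility, see Supporting Different Platform Versions.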
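The API level check described above can be sketched in plain Java. This is a minimal illustration, not platform code: the class name, the helper names, and the returned labels are hypothetical, and on a real device the first argument would come from Build.VERSION.SDK_INT.

```java
public class ApiLevelGate {
    // Android 6.0 corresponds to API level 23.
    static final int API_LEVEL_M = 23;

    // Hypothetical helper: returns true when the device is new enough
    // to run an API introduced at requiredApiLevel.
    static boolean canUse(int deviceApiLevel, int requiredApiLevel) {
        return deviceApiLevel >= requiredApiLevel;
    }

    // Picks a code path based on the device API level. The labels are
    // placeholders for whatever the app actually does on each path.
    static String choosePath(int deviceApiLevel) {
        return canUse(deviceApiLevel, API_LEVEL_M) ? "api-23-path" : "fallback-path";
    }

    public static void main(String[] args) {
        // On a device, pass Build.VERSION.SDK_INT instead of a literal.
        System.out.println(choosePath(23));
        System.out.println(choosePath(21));
    }
}
```

Keeping the check in one helper makes it easier to audit every place where an app calls an API that is newer than its minSdkVersion.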

For more information about how API levels work, see What is API Level?

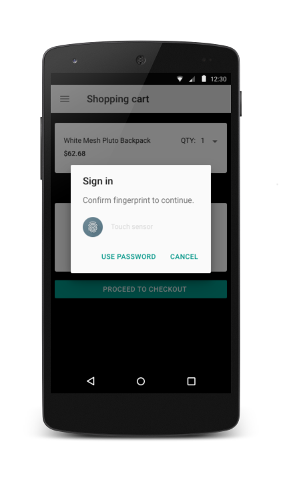

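Fingerprint Authentication

This release offers new APIs that let you authenticate users by using their fingerprint scans on supported devices. Use these APIs in conjunction with the Android Keystore system.

To authenticate users via fingerprint scan, get an instance of the new FingerprintManager class and call the authenticate() method. Your app must be running on a compatible device with a fingerprint sensor. You must implement the user interface for the fingerprint authentication flow in your app, and use the standard Android fingerprint icon in your UI. The Android fingerprint icon (ic_fp_40px.png) is included in the Biometric Authentication sample. If you are developing multiple apps that use fingerprint authentication, note that each app must authenticate the user's fingerprint independently.

To use this feature in your app, first add the USE_FINGERPRINT permission in your manifest.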

<uses-permission android:name="android.permission.USE_FINGERPRINT" />

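To see an app implementation of fingerprint authentication, refer to the Biometric Authentication sample. For a demonstration of how you can use these authentication APIs in conjunction with other Android APIs, see the video Fingerprint and Payment APIs.

If you are testing this feature, follow these steps:

- Install Android SDK Tools Revision 24.3, if you have not done so.

- Enroll a new fingerprint in the emulator by going to Settings > Security > Fingerprint, then follow the enrollment instructions.

- Use an emulator to emulate fingerprint touch events with the following command. Use the same command to emulate fingerprint touch events on the lock screen or in your app.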

adb -e emu finger touch <finger_id>

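On Windows, you may have to run telnet 127.0.0.1 <emulator-id> followed by finger touch <finger_id>.

Confirm Credential

Your app can authenticate users based on how recently they last unlocked their device. This feature frees users from having to remember additional app-specific passwords, and avoids the need for you to implement your own authentication user interface. Your app should use this feature in conjunction with a public or secret key implementation for user authentication.

To set the timeout duration for which the same key can be re-used after a user is successfully authenticated, call the new setUserAuthenticationValidityDurationSeconds() method when you set up a KeyGenerator or KeyPairGenerator.

Avoid showing the re-authentication dialog excessively. Your app should try using the cryptographic object first and, if the timeout has expired, use the createConfirmDeviceCredentialIntent() method to re-authenticate the user within your app.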
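The timeout rule above can be modeled in a few lines of plain Java. This is only a sketch of the decision an app faces, under stated assumptions: the class and method names are hypothetical, the timestamps are supplied by the caller, and treating a key as still valid at exactly the timeout boundary is an assumption. On a device the Keystore enforces the validity duration itself, so an app would normally just try the cryptographic operation and react to a failure.

```java
public class CredentialFreshness {
    // Hypothetical model: returns true when more than validitySeconds have
    // passed since the user last authenticated, meaning the app should
    // re-authenticate the user before using the key again.
    static boolean needsReauthentication(long lastAuthMillis, long nowMillis, int validitySeconds) {
        long elapsedMillis = nowMillis - lastAuthMillis;
        // Assumption: the key is still usable at exactly the boundary.
        return elapsedMillis > validitySeconds * 1000L;
    }

    public static void main(String[] args) {
        long lastAuth = 0L;
        // 30-second validity window, checked 10 s and 31 s after authentication.
        System.out.println(needsReauthentication(lastAuth, 10_000L, 30));
        System.out.println(needsReauthentication(lastAuth, 31_000L, 30));
    }
}
```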

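App Linking

This release enhances Android's intent system by providing more powerful app linking. This feature lets you associate an app with a web domain you own. Based on this association, the platform can determine the default app to use to handle a particular web link and skip prompting users to select an app. To learn how to implement this feature, see Handling App Links.

Auto Backup for Apps

The system now performs automatic full data backup and restore for apps. Your app must target Android 6.0 (API level 23) to enable this behavior; you do not need to add any additional code. If users delete their Google accounts, their backup data is deleted as well. To learn how this feature works and how to configure what to back up on the file system, see Configuring Auto Backup for Apps.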

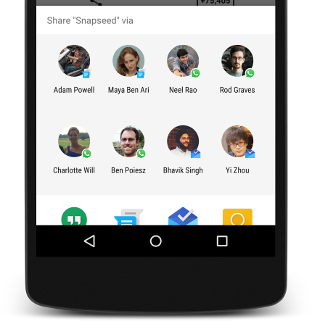

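Direct Share

This release provides you with APIs to make sharing intuitive and quick for users. You can now define direct share targets that launch a specific activity in your app. These direct share targets are exposed to users via the Share menu. This feature lets users share content to targets, such as contacts, within other apps. For example, the direct share target might launch an activity in another social network app, which lets the user share content directly to a specific friend or community in that app.

To enable direct share targets, you must define a class that extends the ChooserTargetService class. Declare your service in the manifest. Within that declaration, specify the BIND_CHOOSER_TARGET_SERVICE permission and an intent filter using the SERVICE_INTERFACE action.

The following example shows how you might declare the ChooserTargetService in your manifest.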

<service android:name=".ChooserTargetService"
    android:label="@string/service_name"
    android:permission="android.permission.BIND_CHOOSER_TARGET_SERVICE">
    <intent-filter>
        <action android:name="android.service.chooser.ChooserTargetService" />
    </intent-filter>
</service>

For each activity that you want to expose to ChooserTargetService, add a <meta-data> element with the name "android.service.chooser.chooser_target_service" in your app manifest.

<activity android:name=".MyShareActivity"
    android:label="@string/share_activity_label">
    <intent-filter>
        <action android:name="android.intent.action.SEND" />
    </intent-filter>
    <meta-data
        android:name="android.service.chooser.chooser_target_service"
        android:value=".ChooserTargetService" />
</activity>

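Voice Interactions

This release provides a new voice interaction API which, together with Voice Actions, allows you to build conversational voice experiences into your apps. Call the isVoiceInteraction() method to determine if a voice action triggered your activity. If so, your app can use the VoiceInteractor class to request a voice confirmation from the user, have the user select from a list of options, and more.

Most voice interactions originate from a user voice action. A voice interaction activity can, however, also start without user input. For example, another app launched through a voice interaction can also send an intent to launch a voice interaction. To determine if your activity launched from a user voice query or from another voice interaction app, call the isVoiceInteractionRoot() method. If another app launched your activity, the method returns false. Your app may then prompt the user to confirm that they intended this action.

To learn more about implementing voice actions, see the Voice Actions developer site.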

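Assist API

This release offers a new way for users to engage with your apps through an assistant. To use this feature, the user must enable the assistant to use the current context. Once enabled, the user can summon the assistant within any app by long-pressing the Home button.

Your app can elect not to share the current context with the assistant by setting the FLAG_SECURE flag. In addition to the standard set of information that the platform passes to the assistant, your app can share additional information by using the new AssistContent class.

To provide the assistant with additional context from your app, follow these steps:

- Implement the Application.OnProvideAssistDataListener interface.

- Register this listener by using registerOnProvideAssistDataListener().

- To provide activity-specific contextual information, override the onProvideAssistData() callback and, optionally, the new onProvideAssistContent() callback.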

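Adoptable Storage Devices

With this release, users can adopt external storage devices such as SD cards. Adopting an external storage device encrypts and formats the device to behave like internal storage. This feature lets users move both apps and the private data of those apps between storage devices. When moving apps, the system respects the android:installLocation preference in the manifest.

If your app accesses the following APIs or fields, be aware that the file paths they return change dynamically when the app is moved between internal and external storage devices. When building file paths, it is strongly recommended that you always call these APIs dynamically. Don't use hardcoded file paths or persist fully-qualified file paths that were built previously.

- Context methods:

- ApplicationInfo fields:

To debug this feature, you can enable adoption of a USB drive that is connected to an Android device through a USB On-The-Go (OTG) cable by running this command: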

$ adb shell sm set-force-adoptable true

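Notifications

This release adds the following API changes for notifications:

- New INTERRUPTION_FILTER_ALARMS filter level that corresponds to the new Alarms only do not disturb mode.

- New CATEGORY_REMINDER category value that is used to distinguish user-scheduled reminders from other events (CATEGORY_EVENT) and alarms (CATEGORY_ALARM).

- New Icon class that you can attach to your notifications via the setSmallIcon() and setLargeIcon() methods. Similarly, the addAction() method now accepts an Icon object instead of a drawable resource ID.

- New getActiveNotifications() method that allows your apps to find out which of their notifications are currently alive.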

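Bluetooth Stylus Support

This release provides improved support for user input using a Bluetooth stylus. Users can pair and connect a compatible Bluetooth stylus with their phone or tablet. While connected, position information from the touch screen is fused with pressure and button information from the stylus to provide a greater range of expression than with the touch screen alone. Your app can listen for stylus button presses and perform secondary actions by registering View.OnContextClickListener and GestureDetector.OnContextClickListener objects in your activity.

Use the MotionEvent methods and constants to detect stylus button interactions:

- If the user touches the screen of your app with a stylus that has a button, the getToolType() method returns TOOL_TYPE_STYLUS.

- For apps targeting Android 6.0 (API level 23), the getButtonState() method returns BUTTON_STYLUS_PRIMARY when the user presses the primary stylus button. If the stylus has a second button, the same method returns BUTTON_STYLUS_SECONDARY when the user presses it. If the user presses both buttons simultaneously, the method returns both values OR'ed together (BUTTON_STYLUS_PRIMARY|BUTTON_STYLUS_SECONDARY).

- For apps targeting a lower platform version, the getButtonState() method returns BUTTON_SECONDARY (for a primary stylus button press), BUTTON_TERTIARY (for a secondary stylus button press), or both.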
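Because getButtonState() returns a bit mask, the button rules above come down to bitwise AND checks. The following plain Java sketch illustrates that decoding under stated assumptions: the constants are local stand-ins whose values are assumed to mirror the corresponding MotionEvent constants, and the class and method names are hypothetical. In an app you would read the state from MotionEvent.getButtonState() and use the MotionEvent constants directly.

```java
import java.util.ArrayList;
import java.util.List;

public class StylusButtons {
    // Stand-in values, assumed to mirror the MotionEvent constants.
    static final int BUTTON_SECONDARY = 1 << 1;
    static final int BUTTON_TERTIARY = 1 << 2;
    static final int BUTTON_STYLUS_PRIMARY = 1 << 5;
    static final int BUTTON_STYLUS_SECONDARY = 1 << 6;

    // Decodes which stylus buttons are pressed. Apps targeting API level 23
    // or higher see the BUTTON_STYLUS_* bits; apps targeting lower versions
    // see BUTTON_SECONDARY and BUTTON_TERTIARY instead.
    static List<String> pressed(int buttonState, int targetSdkVersion) {
        boolean targetsM = targetSdkVersion >= 23;
        int primaryMask = targetsM ? BUTTON_STYLUS_PRIMARY : BUTTON_SECONDARY;
        int secondaryMask = targetsM ? BUTTON_STYLUS_SECONDARY : BUTTON_TERTIARY;

        List<String> result = new ArrayList<>();
        if ((buttonState & primaryMask) != 0) {
            result.add("primary");
        }
        if ((buttonState & secondaryMask) != 0) {
            result.add("secondary");
        }
        return result;
    }

    public static void main(String[] args) {
        int both = BUTTON_STYLUS_PRIMARY | BUTTON_STYLUS_SECONDARY;
        System.out.println(pressed(both, 23));
        System.out.println(pressed(BUTTON_SECONDARY, 22));
    }
}
```

Testing each bit separately, rather than comparing the whole state for equality, keeps the check correct when both buttons are pressed at once.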

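Improved Bluetooth Low Energy Scanning

If your app performs Bluetooth Low Energy scans, use the new setCallbackType() method to specify that you want the system to notify callbacks when it first finds, or sees after a long time, an advertisement packet matching the set ScanFilter. This approach to scanning is more power-efficient than what was provided in previous platform versions.

Hotspot 2.0 Release 1 Support

This release adds support for the Hotspot 2.0 Release 1 spec on Nexus 6 and Nexus 9 devices. To provision Hotspot 2.0 credentials in your app, use the new methods of the WifiEnterpriseConfig class, such as setPlmn() and setRealm(). In the WifiConfiguration object, you can set the FQDN and the providerFriendlyName fields. The new isPasspointNetwork() method indicates if a detected network represents a Hotspot 2.0 access point.

4K Display Mode

The platform now allows apps to request that the display resolution be upgraded to 4K rendering on compatible hardware. To query the current physical resolution, use the new Display.Mode APIs. If the UI is drawn at a lower logical resolution and is upscaled to a larger physical resolution, be aware that the physical resolution the getPhysicalWidth() method returns may differ from the logical resolution reported by getSize().

You can request that the system change the physical resolution while your app runs by setting the preferredDisplayModeId property of your app's window. This feature is useful if you want to switch to 4K display resolution. While in 4K display mode, the UI continues to be rendered at the original resolution (such as 1080p) and is upscaled to 4K, but SurfaceView objects may show content at the native resolution.

Themeable ColorStateLists

Theme attributes are now supported in ColorStateList for devices running Android 6.0 (API level 23). The Resources.getColorStateList() and Resources.getColor() methods have been deprecated. If you are calling these APIs, call the new Context.getColorStateList() or Context.getColor() methods instead. These methods are also available in the v4 support library via ContextCompat.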

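Audio Features

This release adds enhancements to audio processing on Android, including:

- Support for the MIDI protocol, with the new android.media.midi APIs. Use these APIs to send and receive MIDI events.

- New AudioRecord.Builder and AudioTrack.Builder classes to create digital audio capture and playback objects respectively, and to configure audio source and sink properties that override the system defaults.

- API hooks for associating audio and input devices. This is particularly useful if your app allows users to start a voice search from a game controller or remote control connected to Android TV. The system invokes the new onSearchRequested() callback when the user starts a search. To determine if the user's input device has a built-in microphone, retrieve the InputDevice object from that callback, then call the new hasMicrophone() method.

- New getDevices() method that lets you retrieve a list of all audio devices currently connected to the system. You can also register an AudioDeviceCallback object if you want the system to notify your app when an audio device connects or disconnects.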

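Video Features

This release adds new capabilities to the video processing APIs, including:

- New MediaSync class that helps applications synchronously render audio and video streams. The audio buffers are submitted in non-blocking fashion and are returned via a callback. It also supports dynamic playback rate.

- New EVENT_SESSION_RECLAIMED event, which indicates that a session opened by the app has been reclaimed by the resource manager. If your app uses DRM sessions, you should handle this event and make sure not to use a reclaimed session.

- New ERROR_RECLAIMED error code, which indicates that the resource manager reclaimed the media resource used by the codec. With this exception, the codec must be released, as it has moved to a terminal state.

- New getMaxSupportedInstances() interface to get a hint for the maximum number of supported concurrent codec instances.

- New setPlaybackParams() method to set the media playback rate for fast or slow motion playback. It also stretches or speeds up the audio playback automatically in conjunction with the video.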

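Camera Features

This release includes the following new APIs for accessing the camera's flashlight and for camera reprocessing of images:

Flashlight API

If a camera device has a flash unit, you can call the setTorchMode() method to switch the flash unit's torch mode on or off without opening the camera device. The app does not have exclusive ownership of the flash unit or the camera device. The torch mode is turned off and becomes unavailable whenever the camera device becomes unavailable, or when other camera resources keeping the torch on become unavailable. Other apps can also call setTorchMode() to turn off the torch mode. When the last app that turned on the torch mode is closed, the torch mode is turned off.

You can register a callback to be notified about the torch mode status by calling the registerTorchCallback() method. The first time the callback is registered, it is immediately called with the torch mode status of all currently known camera devices with a flash unit. If the torch mode is turned on or off successfully, the onTorchModeChanged() method is invoked.

Reprocessing API

The Camera2 API is extended to support YUV and private opaque format image reprocessing. To determine if these reprocessing capabilities are available, call getCameraCharacteristics() and check for the REPROCESS_MAX_CAPTURE_STALL key. If a device supports reprocessing, you can create a reprocessable camera capture session by calling createReprocessableCaptureSession(), and create requests for input buffer reprocessing.

Use the ImageWriter class to connect the input buffer flow to the camera reprocessing input. To get an empty buffer, follow this programming model:

- Call the dequeueInputImage() method.

- Fill the data into the input buffer.

- Send the buffer to the camera by calling the queueInputImage() method.

If you are using an ImageWriter object together with a PRIVATE image, your app cannot access the image data directly. Instead, pass the PRIVATE image directly to the ImageWriter by calling the queueInputImage() method without any buffer copy.

The ImageReader class now supports PRIVATE format image streams. This support allows your app to maintain a circular image queue of ImageReader output images, select one or more images, and send them to the ImageWriter for camera reprocessing.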

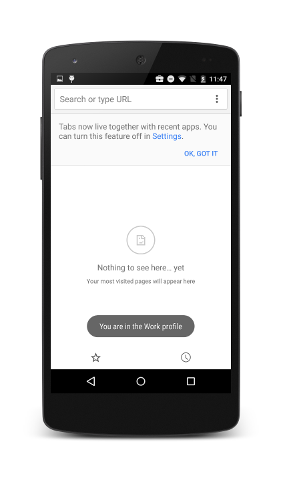

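Android for Work Features

This release includes the following new APIs for Android for Work:

- Enhanced controls for Corporate-Owned, Single-Use devices: The Device Owner can now control the following settings to improve management of Corporate-Owned, Single-Use (COSU) devices:

  - Disable or re-enable the keyguard with the setKeyguardDisabled() method.

  - Disable or re-enable the status bar (including quick settings, notifications, and the navigation swipe-up gesture that launches Google Now) with the setStatusBarDisabled() method.

  - Disable or re-enable safe boot with the UserManager constant DISALLOW_SAFE_BOOT.

  - Prevent the screen from turning off while plugged in with the STAY_ON_WHILE_PLUGGED_IN constant.

- Silent install and uninstall of apps by Device Owner: A Device Owner can now silently install and uninstall applications using the PackageInstaller APIs, independent of Google Play for Work. You can now provision devices through a Device Owner that fetches and installs apps without user interaction. This feature is useful for enabling one-touch provisioning of kiosks or other such devices without activating a Google account.

- Silent enterprise certificate access: When an app calls choosePrivateKeyAlias(), prior to the user being prompted to select a certificate, the Profile or Device Owner can now call the onChoosePrivateKeyAlias() method to provide the alias silently to the requesting application. This feature lets you grant managed apps access to certificates without user interaction.

- Auto-acceptance of system updates: By setting a system update policy with setSystemUpdatePolicy(), a Device Owner can now auto-accept a system update, for instance in the case of a kiosk device, or postpone the update and prevent it from being taken by the user for up to 30 days. Furthermore, an administrator can set a daily time window in which an update must be taken, for example during the hours when a kiosk device is not in use. When a system update is available, the system checks if the Device Policy Controller app has set a system update policy, and behaves accordingly.

- Delegated certificate installation: A Profile or Device Owner can now grant a third-party app the ability to call DevicePolicyManager certificate management APIs.

- Data usage tracking: A Profile or Device Owner can now query the data usage statistics visible in Settings > Data usage by using the new NetworkStatsManager methods. Profile Owners are automatically granted permission to query data on the profile they manage, while Device Owners get access to usage data of the managed primary user.

- Runtime permission management: A Profile or Device Owner can use setPermissionPolicy() to set a permission policy for all runtime requests of all applications: prompt the user to grant the permission as normal, grant the permission automatically, or deny the permission silently. If either of the automatic policies is set, the user cannot modify the selection made by the Profile or Device Owner within the app's permissions screen in Settings.

- VPN in Settings: VPN apps are now visible in Settings > More > VPN. Additionally, the notifications that accompany VPN usage are now specific to how that VPN is configured. For a Profile Owner, the notifications are specific to whether the VPN is configured for a managed profile, a personal profile, or both. For a Device Owner, the notifications are specific to whether the VPN is configured for the entire device.

- Work status notification: A status bar briefcase icon now appears whenever an app from the managed profile has an activity in the foreground. Furthermore, if the device is unlocked directly to the activity of an app in the managed profile, a toast is displayed notifying the user that they are within the work profile.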
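The daily update window mentioned under auto-acceptance of system updates raises one practical detail: a window such as 22:00 to 04:00 wraps past midnight. The plain Java sketch below shows one way to test whether a given time falls inside such a window. It is an illustration under stated assumptions, not the platform's implementation: the class and method names are hypothetical, times are expressed as minutes since midnight, the end of the window is treated as exclusive, and equal start and end values are treated as an empty window.

```java
public class MaintenanceWindow {
    // Returns true when nowMinute lies inside [startMinute, endMinute).
    // All values are minutes since midnight, in the range 0 to 1439.
    static boolean isInWindow(int startMinute, int endMinute, int nowMinute) {
        if (startMinute <= endMinute) {
            // Window within a single day, for example 01:00 to 02:00.
            return nowMinute >= startMinute && nowMinute < endMinute;
        }
        // Window wraps past midnight, for example 22:00 to 04:00.
        return nowMinute >= startMinute || nowMinute < endMinute;
    }

    public static void main(String[] args) {
        int start = 22 * 60; // 22:00
        int end = 4 * 60;    // 04:00
        System.out.println(isInWindow(start, end, 23 * 60)); // 23:00
        System.out.println(isInWindow(start, end, 12 * 60)); // 12:00
    }
}
```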