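ML Kit is a mobile SDK that brings Google's machine learning expertise to Android and iOS apps in a powerful yet easy-to-use package. Whether you are new to machine learning or experienced with it, you can implement the functionality you need in just a few lines of code. You don't need expertise in neural networks or model optimization to get started.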

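How it works

ML Kit brings Google's machine learning technologies, such as Mobile Vision and TensorFlow Lite, together in a single SDK so you can apply them in your own apps. Whether you need the real-time capabilities of Mobile Vision's on-device models or the flexibility of custom TensorFlow Lite models, ML Kit makes both available with a few lines of code.

This codelab walks you through adding text recognition, language identification, and translation from a real-time camera feed to an existing Android app. It also covers best practices for using CameraX together with the ML Kit APIs.

What you'll build

In this codelab, you'll build an Android app with ML Kit. The app uses the ML Kit Text Recognition on-device API to recognize text from a real-time camera feed. It uses the ML Kit Language Identification API to identify the language of the recognized text. Finally, it uses the ML Kit Translation API to translate that text into any of 59 available languages.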

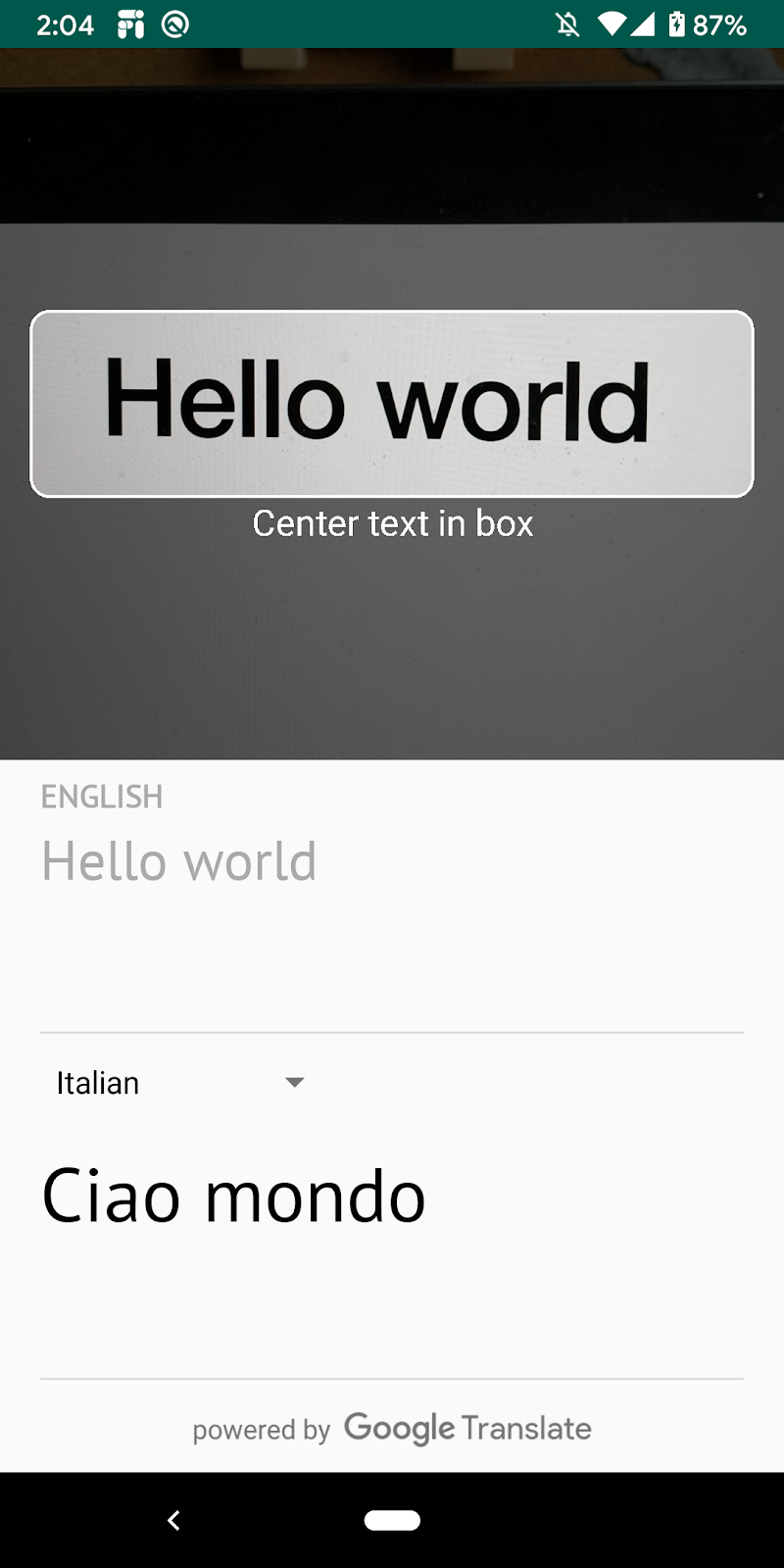

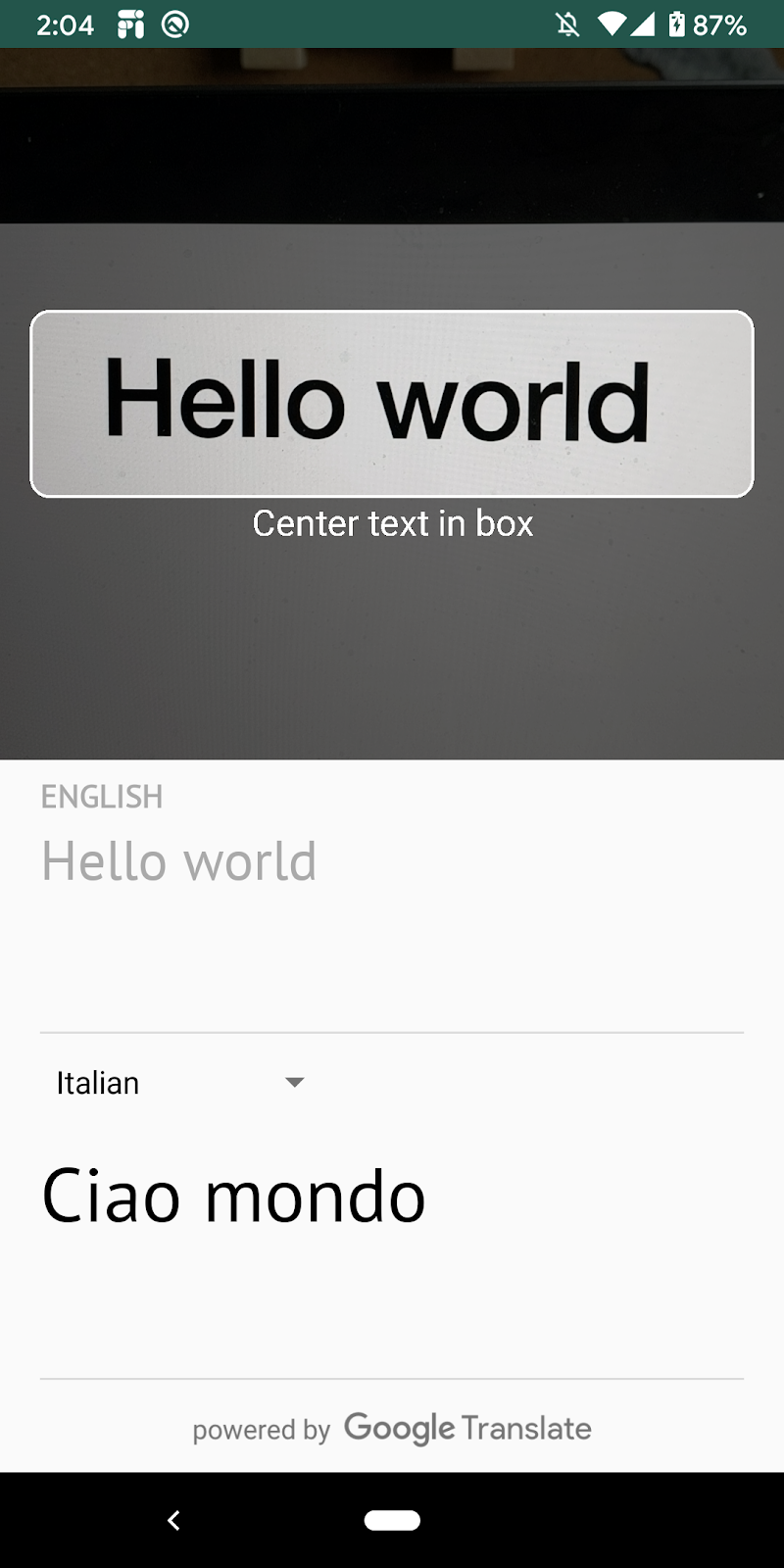

最後,您應該會看到類似下圖的畫面。

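What you'll learn

- How to use the ML Kit SDK to add machine learning capabilities to any Android app.

- The ML Kit Text Recognition, Language Identification, and Translation APIs and what they can do.

- How to use the CameraX library with the ML Kit APIs.

What you'll need

- A recent version of Android Studio (4.0 or later)

- A physical Android device

- The sample code

- Basic knowledge of Android development in Kotlin

This codelab focuses on ML Kit. Concepts and code blocks that are not relevant to that topic are already provided and implemented for you.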

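Download the code

Click the link below to download all the code for this codelab:

Unpack the downloaded ZIP file. This unpacks a root folder (mlkit-android) containing all the resources you need. For this codelab, you only need the resources in the translate subdirectory.

The translate subdirectory in the mlkit-android repository contains the following directory:

starter: the starting code that you build on in this codelab.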


In the app/build.gradle file, verify that the required ML Kit and CameraX dependencies are included:

    // CameraX dependencies
    def camerax_version = "1.0.0-beta05"
    implementation "androidx.camera:camera-core:${camerax_version}"
    implementation "androidx.camera:camera-camera2:${camerax_version}"
    implementation "androidx.camera:camera-lifecycle:${camerax_version}"
    implementation "androidx.camera:camera-view:1.0.0-alpha12"

    // ML Kit dependencies
    implementation 'com.google.android.gms:play-services-mlkit-text-recognition:16.0.0'
    implementation 'com.google.mlkit:language-id:16.0.0'
    implementation 'com.google.mlkit:translate:16.0.0'

Now that you have imported the project into Android Studio and checked the ML Kit dependencies, you are ready to run the app for the first time. Connect your Android device, then click the Run icon in the Android Studio toolbar.

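The app should launch on your device. You can point the camera at various text and see the live feed, but text recognition is not implemented yet.

In this step, we add functionality to the app to recognize text from the camera.

Instantiate the ML Kit text detector

Add the following field to the top of TextAnalyzer.kt. This is how you get a handle to the text recognizer for use in the following steps.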

TextAnalyzer.kt

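    private val detector = TextRecognition.getClient()

Run on-device text recognition on a Vision image (created from the camera buffer)

The CameraX library provides a stream of images from the camera for image analysis. Replace the recognizeTextOnDevice() method in the TextAnalyzer class so that it runs ML Kit text recognition on each image frame.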

TextAnalyzer.kt

    private fun recognizeTextOnDevice(
        image: InputImage
    ): Task<Text> {
        // Pass image to an ML Kit Vision API
        return detector.process(image)
            .addOnSuccessListener { visionText ->
                // Task completed successfully
                result.value = visionText.text
            }
            .addOnFailureListener { exception ->
                // Task failed with an exception
                Log.e(TAG, "Text recognition error", exception)
                val message = getErrorMessage(exception)
                message?.let {
                    Toast.makeText(context, message, Toast.LENGTH_SHORT).show()
                }
            }
    }

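The following lines show how to call the method above to start text recognition. Add them at the end of the analyze() method. Note that you must call imageProxy.close() once analysis of the image is complete; otherwise the live camera feed cannot deliver further images for analysis.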

TextAnalyzer.kt

    recognizeTextOnDevice(InputImage.fromBitmap(croppedBitmap, 0)).addOnCompleteListener {
        imageProxy.close()
    }

Run the app on your device

Now click the Run icon in the Android Studio toolbar. Once the app loads, it should start recognizing text from the camera in real time. Point the camera at any text to confirm.
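To make the note about imageProxy.close() more concrete, here is a toy model in plain Kotlin of why an unclosed frame stalls analysis. It is only a sketch under the assumption that the feed holds back new frames until the current one is closed and keeps just the most recent pending frame. `Frame` and `ToyFeed` are hypothetical names invented for illustration and are not CameraX classes.

```kotlin
// Toy stand-in for a camera frame handed to an analyzer (hypothetical, not a CameraX class).
class Frame(val id: Int, private val onClose: () -> Unit) {
    fun close() = onClose()
}

// Toy feed (hypothetical): while a frame is being analyzed, newer frames are not
// delivered; only the most recent one is remembered and handed over after close().
class ToyFeed(private val analyze: (Frame) -> Unit) {
    private var busy = false
    private var pending: Int? = null

    // Called for every frame the "camera" produces.
    fun onCameraFrame(id: Int) {
        if (busy) {
            pending = id // overwrite any older pending frame
            return
        }
        deliver(id)
    }

    private fun deliver(id: Int) {
        busy = true
        analyze(Frame(id) {
            // Closing the frame frees the feed to deliver the latest pending frame.
            busy = false
            pending?.let { next ->
                pending = null
                deliver(next)
            }
        })
    }
}
```

In this model, an analyzer that never calls close() receives the first frame and nothing afterwards, which mirrors the stalled feed described above. Closing the frame in a completion callback, as the snippet in this step does, releases it whether recognition succeeds or fails.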

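Instantiate the ML Kit language identifier

Add the following field to MainViewModel.kt. This is how you get a handle to the language identifier for use in the following steps.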

MainViewModel.kt

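    private val languageIdentification = LanguageIdentification.getClient()

Run on-device language identification on the detected text

Use the ML Kit language identifier to get the language of the text detected in the image.

Replace the TODO in the sourceLang field definition in MainViewModel.kt with the following code. This snippet calls the language identification method and assigns the result unless the language is undetermined ("und").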

MainViewModel.kt

    languageIdentification.identifyLanguage(text)
        .addOnSuccessListener {
            if (it != "und")
                result.value = Language(it)
        }

Run the app on your device

Now click the Run icon in the Android Studio toolbar. Once the app loads, it should start recognizing text from the camera and identifying the language of that text in real time. Point the camera at any text to confirm.
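The "und" check in the language identification step has a useful side effect: when a frame's text cannot be classified, the previously identified language stays in place instead of being overwritten. A minimal plain-Kotlin sketch of that behavior follows. `SourceLanguageHolder` and `UNDETERMINED_LANGUAGE` are hypothetical names for illustration and are not part of the codelab or of ML Kit.

```kotlin
// "und" is the tag this codelab treats as "language could not be determined".
const val UNDETERMINED_LANGUAGE = "und"

// Hypothetical holder for the most recent confidently identified language tag.
class SourceLanguageHolder {
    var code: String? = null
        private set

    // Accept a new identification result, ignoring undetermined ones so the
    // last known language is kept.
    fun onIdentified(languageTag: String) {
        if (languageTag != UNDETERMINED_LANGUAGE) {
            code = languageTag
        }
    }
}
```

With this shape, feeding "en" followed by "und" leaves the holder at "en", so a single hard-to-read frame does not reset the source language shown to the user.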

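Replace the translate() function in MainViewModel.kt with the following code. This function takes the source language value, the target language value, and the source text, and performs the translation. Note that if the model for the chosen target language has not yet been downloaded to the device, we call downloadModelIfNeeded() to download it and then proceed with the translation.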

MainViewModel.kt

    private fun translate(): Task<String> {
        val text = sourceText.value
        val source = sourceLang.value
        val target = targetLang.value
        // Proceed only when both flags are explicitly false, i.e. no download
        // or translation is currently in progress.
        if (modelDownloading.value != false || translating.value != false) {
            return Tasks.forCanceled()
        }
        // Nothing to translate yet: return an empty result.
        if (source == null || target == null || text == null || text.isEmpty()) {
            return Tasks.forResult("")
        }
        // Map the language tags to ML Kit translation language codes.
        val sourceLangCode = TranslateLanguage.fromLanguageTag(source.code)
        val targetLangCode = TranslateLanguage.fromLanguageTag(target.code)
        if (sourceLangCode == null || targetLangCode == null) {
            return Tasks.forCanceled()
        }
        val options = TranslatorOptions.Builder()
            .setSourceLanguage(sourceLangCode)
            .setTargetLanguage(targetLangCode)
            .build()
        val translator = translators[options]
        modelDownloading.setValue(true)

        // Register watchdog to unblock long running downloads.
        // The main looper is passed explicitly because the no-argument
        // Handler() constructor is deprecated.
        Handler(Looper.getMainLooper()).postDelayed({ modelDownloading.setValue(false) }, 15000)
        modelDownloadTask = translator.downloadModelIfNeeded().addOnCompleteListener {
            modelDownloading.setValue(false)
        }
        translating.value = true
        // Translate only after the model download has succeeded.
        return modelDownloadTask.onSuccessTask {
            translator.translate(text)
        }.addOnCompleteListener {
            translating.value = false
        }
    }
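The function above looks up a translator with `translators[options]`, but this section does not show how `translators` is defined. One plausible reading is a small cache keyed by the translator options, which creates a translator on first use and closes the least recently used one when full. The sketch below illustrates that idea in plain Kotlin. `ClosingLruCache` and `FakeTranslator` are hypothetical names, and the starter project's actual implementation may differ.

```kotlin
// Hypothetical stand-in for a translator; only the close() behavior matters here.
class FakeTranslator(val key: String) : AutoCloseable {
    var closed = false
        private set

    override fun close() {
        closed = true
    }
}

// Small LRU cache (hypothetical): creates values on demand and closes the
// least recently used value when the cache grows past maxSize.
class ClosingLruCache<K, V : AutoCloseable>(
    private val maxSize: Int,
    private val create: (K) -> V
) {
    // accessOrder = true keeps entries ordered from least to most recently used.
    private val entries = object : LinkedHashMap<K, V>(16, 0.75f, true) {
        override fun removeEldestEntry(eldest: MutableMap.MutableEntry<K, V>): Boolean {
            val evict = size > maxSize
            if (evict) eldest.value.close() // release resources held by the evicted value
            return evict
        }
    }

    // Enables the cache[key] syntax used in translate().
    operator fun get(key: K): V = entries.getOrPut(key) { create(key) }
}
```

A cache like this would let the app switch back and forth between a few language pairs without recreating a translator each time, while bounding how many stay open at once.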

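Run the app on your device

Now click the Run icon in the Android Studio toolbar. Once the app loads, it should show the recognized text, the identified language, and the text translated into the selected language. You can choose any of the 59 languages.

Congratulations! You have just used ML Kit to add on-device text recognition, language identification, and translation to your app. You can now recognize text and its language from the live camera feed and translate that text into a language of your choice in real time.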

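What we've covered

- How to add ML Kit to your Android app

- How to use on-device text recognition in ML Kit to recognize text in images

- How to use on-device language identification in ML Kit to identify the language of text

- How to use on-device translation in ML Kit to translate text dynamically into 59 languages

- How to use CameraX together with the ML Kit APIs

Next steps

- Use ML Kit and CameraX in your own Android app!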