Google Assistant lets users operate Android apps by voice. With Assistant, users can launch apps, perform tasks, access content, and more by using voice commands such as "OK Google, start a run on Example App."

Android developers can use the Assistant development framework and testing tools to voice-enable their apps on Android-based surfaces such as mobile devices, cars, and wearables.

App Actions

Assistant's App Actions let users operate Android apps through Google Assistant.

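App Actions enable deeper voice control, letting users launch your app and perform tasks such as the following:

- Launch features from Assistant: connect your app's features to matching user queries.

- Display app information on Google surfaces: provide Android widgets for Assistant to display, offering inline answers, simple confirmations, and brief interactions to users without changing context.

- Suggest voice shortcuts from Assistant: use Assistant to proactively suggest tasks in the right context so that users can discover or replay them.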

App Actions use built-in intents (BIIs) to enable these and many other use cases across common task categories. For details on supporting BIIs in your app, see the App Actions overview on this page.

Multidevice development

You can use App Actions to provide voice control on device surfaces beyond mobile. For example, BIIs optimized for automotive use cases let users perform tasks in the car by voice.

App Actions overview

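You use App Actions to offer finer-grained voice control of your app, so that users can perform specific tasks in the app by voice. If a user has your app installed, they can state their intent with a phrase that includes the app name, such as "OK Google, start an exercise on Example App." App Actions support BIIs that model the common ways users express the tasks they want to accomplish or the information they seek, such as:

- Starting an exercise, sending a message, and other category-specific actions.

- Opening a feature of your app.

- Querying for products or content using in-app search.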

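With App Actions, Assistant can proactively suggest your voice capabilities to users as shortcuts, based on the user's context. This lets users easily discover and replay your App Actions. You can also suggest these shortcuts inside your app by using the App Actions in-app promo SDK.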

To enable support for App Actions, declare <capability> tags in shortcuts.xml. Capabilities tell Google how your in-app functionality can be accessed, and they enable voice support for your features.
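As a rough illustration of the declaration described above, a minimal shortcuts.xml might look like the following sketch. The package name `com.example.app` and the class `ExerciseActivity` are hypothetical placeholders, not names from this page, and the choice of the START_EXERCISE BII simply mirrors the running example used elsewhere on this page.

```xml
<?xml version="1.0" encoding="utf-8"?>
<!-- res/xml/shortcuts.xml: minimal sketch; package and class names are hypothetical -->
<shortcuts xmlns:android="http://schemas.android.com/apk/res/android">
  <!-- Declares that the app supports the START_EXERCISE built-in intent -->
  <capability android:name="actions.intent.START_EXERCISE">
    <!-- Fulfillment: the Android intent that Assistant launches for this BII -->
    <intent
      android:action="android.intent.action.VIEW"
      android:targetPackage="com.example.app"
      android:targetClass="com.example.app.ExerciseActivity" />
  </capability>
</shortcuts>
```

In this sketch the capability has no parameters, so a matching query would only open the named activity. An app would typically also need to reference this resource file from its manifest for it to take effect.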

Assistant fulfills user intents by launching your app to the specified content or action. For some use cases, you can specify an Android widget to display within Assistant to fulfill the user query.

App Actions are supported on Android 5 (API level 21) and higher. Users can access App Actions only on Android phones. Assistant on Android Go does not support App Actions.

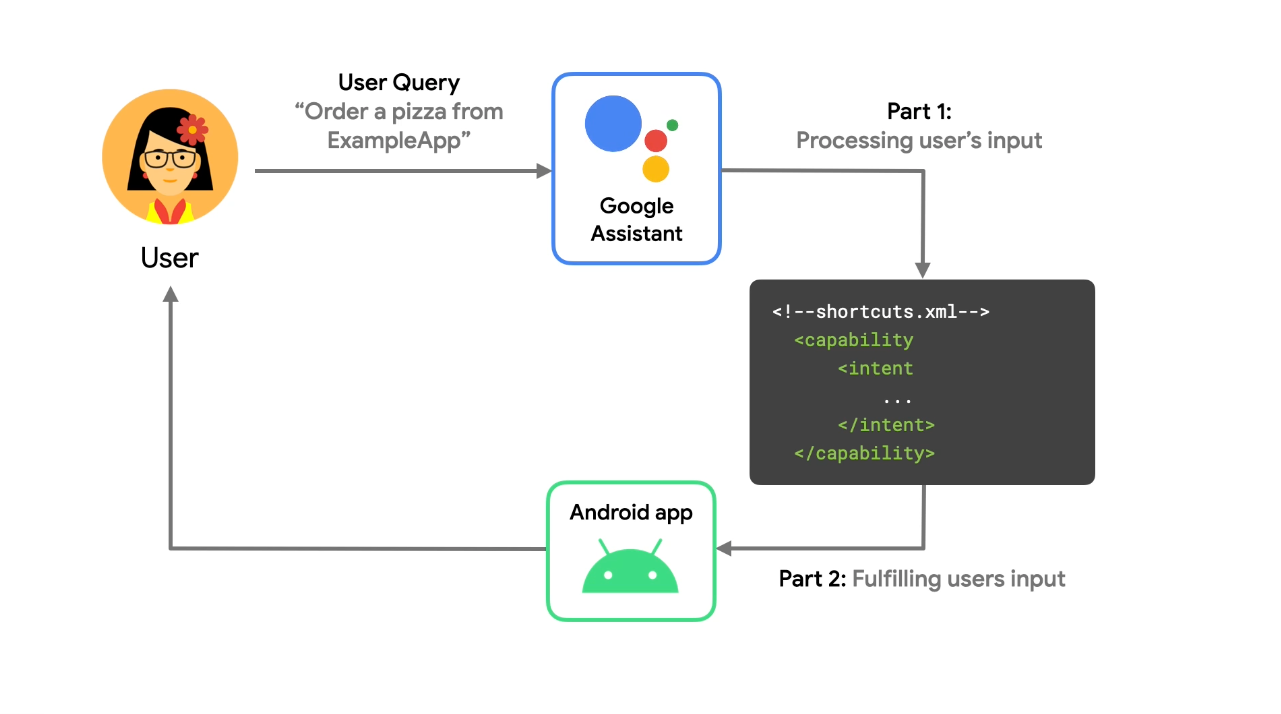

How App Actions work

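App Actions extend your in-app functionality to Assistant, letting users access your app's features by voice. When a user invokes an App Action, Assistant matches the query to a BII declared in your shortcuts.xml resource, and then either launches your app at the requested screen or displays an Android widget.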

You declare BIIs in your app by using Android capability elements. When the app is uploaded through the Google Play Console, Google registers the capabilities declared in the app so that users can access them from Assistant.

For example, your app might provide a capability for starting an exercise. When a user says "OK Google, start a run on Example App," the following steps occur:

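- Assistant performs natural language analysis on the query and matches the semantics of the request to the predefined pattern of a BII. In this example, the actions.intent.START_EXERCISE BII matches the query.

- Assistant checks whether the BII was previously registered for your app and uses that configuration to determine how to launch it.

- Assistant generates an Android intent that launches the in-app destination, using the information you provide in the <capability>. Assistant extracts the parameters of the query and passes them as extras in the generated Android intent.

- Assistant fulfills the user request by launching the generated Android intent. You configure the intent to either launch a screen in your app or display a widget within Assistant.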

After a user completes a task, you can use the Google Shortcuts Integration Library to provide the action and its parameters to Google so that the action can be surfaced as a shortcut.

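Using this library makes your shortcuts eligible for discovery and replay on Google surfaces such as Assistant. For example, a ride-sharing app might push a shortcut to Google for each destination a user requests, so that the destination can later appear as a shortcut suggestion for quick replay.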

Build App Actions

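App Actions build on top of existing functionality in your Android app. The process is much the same for every App Action you implement. App Actions take users directly to specific content or features in your app by using the capability elements you specify in shortcuts.xml.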

When you build an App Action, first identify the activity that you want users to be able to access from Assistant. Then use that information to find the closest matching BII in the App Actions BII reference.

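BIIs model the common ways that users express the tasks they want to accomplish in an app or the information they are looking for. For example, BIIs exist for actions such as starting an exercise, sending a message, and searching within an app. Because BIIs model common variations of user queries in multiple languages, they are the best way to get started with App Actions and let you voice-enable your app quickly.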

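Once you have identified the in-app functionality and the BII to implement, add or update the shortcuts.xml resource file in your Android app to map the BII to your app's functionality. App Actions, defined as capability elements in shortcuts.xml, describe how each BII resolves its fulfillment and which parameters are extracted and passed to your app.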

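Much of the work in developing App Actions is mapping BII parameters to your defined fulfillment. This usually takes the form of mapping the expected inputs of your in-app functionality to the semantic parameters of a BII.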
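The parameter mapping described above could be sketched as follows. This assumes that the START_EXERCISE BII exposes a parameter named `exercise.name` (check the BII reference to confirm). The extra key `exerciseType`, the package name, and the activity class are hypothetical names chosen for illustration.

```xml
<?xml version="1.0" encoding="utf-8"?>
<!-- res/xml/shortcuts.xml: parameter-mapping sketch; all app-specific names are hypothetical -->
<shortcuts xmlns:android="http://schemas.android.com/apk/res/android">
  <capability android:name="actions.intent.START_EXERCISE">
    <intent
      android:action="android.intent.action.VIEW"
      android:targetPackage="com.example.app"
      android:targetClass="com.example.app.ExerciseActivity">
      <!-- android:name is the BII's semantic parameter (assumed: exercise.name).
           android:key is the intent extra key the app reads (hypothetical: exerciseType). -->
      <parameter
        android:name="exercise.name"
        android:key="exerciseType" />
    </intent>
  </capability>
</shortcuts>
```

With a mapping like this, a query such as "start a run" would be expected to reach the activity with an `exerciseType` extra carrying the extracted exercise name, which the activity can then read from its launching intent.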

Test App Actions

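During development and testing, you use the Google Assistant plugin for Android Studio to create a preview of your App Actions in Assistant for your own Google account. The plugin lets you test how an App Action handles various parameters before you submit the app for deployment. Once you have generated a preview of your App Action in the test tool, you can trigger the App Action on a test device directly from the test tool window.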

Media apps

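Assistant also has built-in capabilities for understanding media app commands, such as "OK Google, play something by Beyoncé," and it supports media controls such as pause, skip, fast forward, and thumbs up.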

Next steps

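Follow the App Actions pathway to build an App Action using the sample Android app. Then continue to the guide to build App Actions for your own app. You can also explore the following additional resources:

- Download and explore the sample fitness Android app on GitHub.

- r/GoogleAssistantDev: the official Reddit community for developers working with Google Assistant.

- If you have a programming question about App Actions, post it to Stack Overflow using the "android" and "app-actions" tags. Before posting, make sure that your question is on topic and that you have read the guidance on how to ask a good question.

- Report bugs and general issues with App Actions features in the public issue tracker.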